Explore our diverse programs

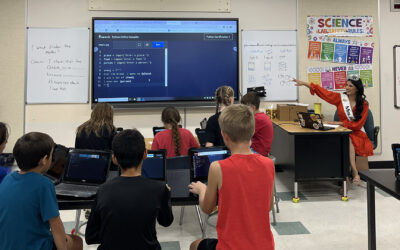

Our programs in computer science support the evolution of the computing and informatics disciplines and the integration of computer and information sciences with other disciplines such as biology, geography, anthropology, public health, urban planning and mathematics.

The data science, analytics and engineering program focuses on the development of new systems and algorithms for collecting, cleaning, storing, valuing, aggregating, fusing, summarizing, managing and drawing inferences from high-dimensional, high-volume, heterogeneous data streams.

Degrees offered: PhD

Our industrial engineering programs concentrate on the design, operation and improvement of the systems required to meet societal needs for products and services. The programs combine knowledge from the physical, mathematical and social sciences to help design efficient manufacturing and service systems that integrate people, equipment and information.

The discipline of informatics makes connections between the work people do and technology that can support that work. It combines aspects of software engineering, human-computer interaction, decision theory, organizational behavior and information technology.

Degrees offered: BSE

The artificial intelligence concentration of robotics and autonomous systems focuses on the algorithmic aspects of robotics, including statistical machine learning, computer vision, natural language processing, knowledge retrieval and reasoning, and formal methods of planning.

Degrees offered: MS with artificial intelligence concentration